Introduction

Oceans can be one of the most relaxing phenomena that nature provides us. Hearing the crashing waves, seeing small water ripples and light refraction through the water surface can take hours of my day when sitting at a beach. This is one of the reasons why this project came to life.

As a graphics programmer, I often ask myself how real life scenarios would translate to a digital implementation. Usually I have a rough idea, but oceans seemed so complex, non-uniform and variable dependent that I decided to ask google instead. This led to a rabbit hole of papers regarding Fourier Transforms, Euler’s formula and scary math which I will be elaborating on in this blogpost.

So if you’ve ever looked at an ocean and wondered how films such as Titanic, AAA games such as Horizon Forbidden West and Sea of Thieves recreated it, then this blogpost is for you.

Table of Contents

- Wave Simulation: JONSWAP & the FFT Pipeline

- Rendering & Shading

- Foam

- Wave Cascades: Multi Scale Detail

- Skybox & Environment

- Major Struggles & Lessons Learned

- Results & Next Steps

Before we start let me show you what we will be building.

Calm open ocean

Ocean with large swells and prominent wind direction

Understanding the basics

How does a sine wave work?

The ocean wave implementation is heavily based on using multiple sine waves to get interesting displacements. Sine waves are oscillators, which means they generate a repetitive wave signal that stays within its amplitude. Three important terms regarding a wave is its amplitude, its wavelength and its frequency. Amplitude is the peak of the wave, the highest value that the signal can reach. Wavelength is the distance between two of these peaks, you can imagine this like a spring. A spring that is compressed would represent a short wavelength while a spring that is stretched out would represent a long wavelength. Frequency is how often a signal repeats within a second. A short wavelength correlates to a high frequency, a long wavelength correlates to a low frequency. This ties together with ocean rendering because we can use this wavelength to shape the waves of our ocean, how we do this will be discussed in this article.What are complex numbers?

A complex number is simply two numbers packed into one. It has a real part and an imaginary part. Its form is \(c = a + bi\) You can think of it like a 2D coordinate. Instead of writing \(p = (3,4)\), you'd write \(p = 3 + 4i\). The \(i\) is the imaginary part of the number, which lives on the axis perpendicular to the real number line. When any number is multiplied by the imaginary \(i\), it is essentially "rotated" by 90° counter clockwise. This rotation property is what lets Euler's formula describe circular motion. Interesting note, multiplying a number by \(i\) twice, brings it to -1, which leads to a whole other discussion on why \(i = \sqrt{-1}\). But that is out of scope for this blogpost.What is Euler's formula?

Euler's formula states that any point on a unit circle can be written as: \[e^{i\theta} = \cos\theta + i\sin\theta\] This tells us that if you take e to the power of an imaginary number, you would get a point on a circle. Here the \(cos\theta\) is the x coordinate, and the \(sin\theta\) is the y coordinate. This is very useful for our ocean simulation since as theta increases over time, the point spins around the circle. This spinning around the circle causes the x and y values to oscillate up and down which drive the displacement of the ocean over time. Euler's formula is a compact way to represent the oscillation of the sin and cosine components packed into one expression.How does a compute shader work?

A compute shader is a shader that runs directly on the GPU, outside of the normal rendering pipeline. Unlike vertex and pixel shaders, it has no fixed role. Instead, it runs a large grid of threads in parallel, allowing the GPU to do many calculations at the same time. A CPU has a small number of powerful cores built for sequential logic. A GPU has thousands of smaller cores built to do the same operation on many pieces of data at once. This makes the GPU much better suited for tasks like the FFT, where the same calculation needs to happen on every point of a texture every frame. The developer defines the size of the thread grid themselves. Each thread knows its own position in the grid via SV_DispatchThreadID, so it knows exactly which point on the ocean it is responsible for. This way every point on the ocean plane gets calculated in parallel.

[numthreads(8, 8, 1)]

void CSMain(uint3 id : SV_DispatchThreadID)

{

// id.xy is this thread's position in the grid

// each thread computes the wave displacement for one point

OutputTexture[id.xy] = ComputeWaveDisplacement(id.xy);

}

Chapter 1 Wave Simulation: JONSWAP & the FFT Pipeline

The ocean surface is made up of thousands of waves, all travelling in different directions with different sizes and speeds. To simulate this, we model the ocean as a sum of sine waves, each with their own frequency, direction and amplitude. The question then becomes: how do we decide how big each of those waves should be?

This is where oceanographers come in. Over decades, researchers measured real ocean surfaces using buoys, photographs and radar measurements to figure out exactly how wave energy is distributed across different frequencies in a fully developed ocean. A fully developed ocean simply means the ocean has had enough time and distance to reach an equilibrium with the wind blowing over it. The result of this research is a spectrum function, which tells us how much energy each wave frequency should have given a set of wind conditions.

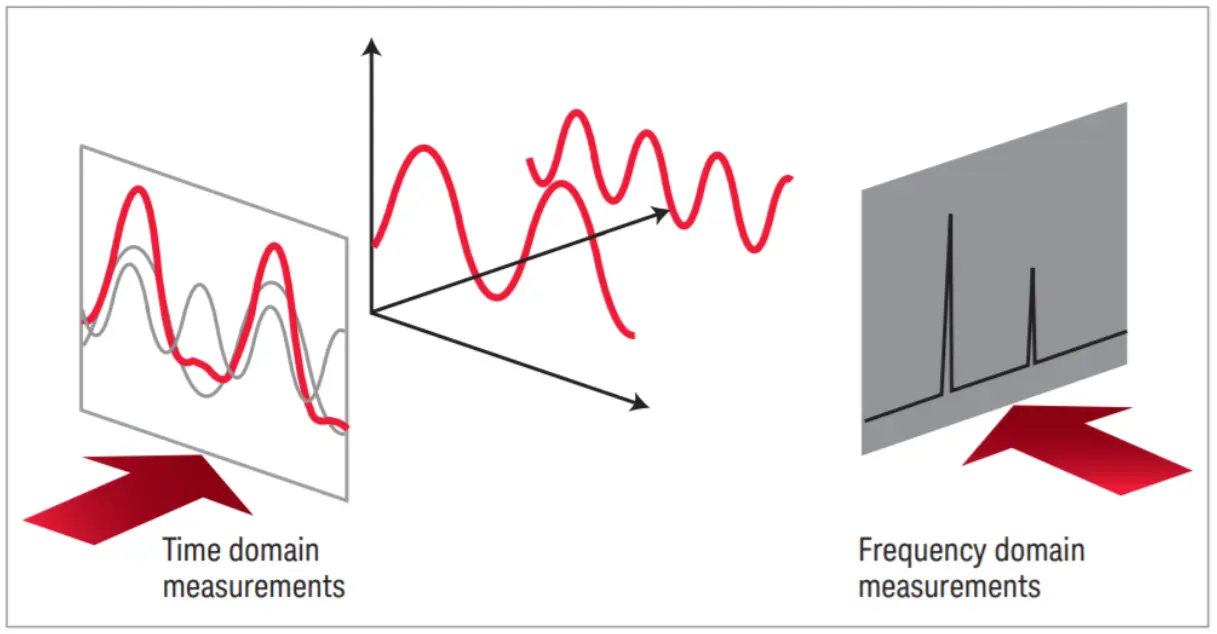

Now, evaluating thousands of sine waves individually for every point on the ocean every frame would be far too slow even for a modern GPU. This is where the FFT comes in. Before we dive into how FFT works, we need to understand the two relevant domains. The Spatial Domain and the Frequency Domain.

The spatial domain is our usual graph that has a value Y on the vertical axis, and a constantly increasing value X usually representing time or position, on the horizontal axis. The frequency domain exposes frequency on the horizontal axis and amplitude on the vertical axis. This produces a graph that visualizes the link between frequencies and their amplitudes.

FFT works in the frequency domain, where each point represents a wave frequency rather than a world position. The FFT algorithm then converts the entire frequency domain into real world displacements (which live in the spatial domain) in one efficient pass. The beautiful thing about this, is that it’s a lossless operation. We can convert our data between domains without losing any information. This is neat since we can remove certain frequencies from the equation, convert the data back to the spatial domain and have the exact same wave minus the removed frequency. You can imagine this like having a musical chord, say C Major 7. This chord contains the notes C, E, G and B. Using the FFT, you would be able to see every note that this chord signal contains, remove the B for example and convert it back to the spatial domain. The result would be a simple C Major chord which has the notes C, E and G.

With this understanding of the FFT, we can now look at how Tessendorf uses it to build the ocean spectrum.

In his paper, Jerry Tessendorf describes the Phillips Spectrum as the model for defining wave energy. After implementing it I found it too limiting since its only parameters were wind speed and direction. So I switched to the JONSWAP spectrum, a more widely adopted model that gives much more creative freedom. With JONSWAP you can control things like how many waves follow the wind direction, the choppiness of the waves, the distance over which the wind affects the surface, and the sharpness of the spectrum peak. This lets you model anything from a calm open ocean to a stormy sea with large aggressive swells.

1.1 The Setup

The ocean simulation is driven by a statistical wave spectrum. Rather than simulating individual water particles, the sea surface is represented as a collection of many sinusoidal waves.

To simulate the ocean, the general idea is that we want to start by generating the initial representation of the heightfield in 2D space. We do this by using the wave spectrum in combination with a gaussian pseudo random number generator. The gaussian random number is used to ensure that each wave component starts at a different point in its cycle. This way two oceans with the same parameters will still look different from each other. This random number becomes the $\xi_r + i\xi_i$ term in the H₀ formula, which we will see in section 1.2.

Tessendorf expresses the ocean height field as a sum of complex wave contributions across all frequencies. We represent the ocean surface using the formula:

\[h(\mathbf{x}, t) = \sum_{\mathbf{k}} \tilde{h}(\mathbf{k}, t) \exp(i\mathbf{k} \cdot \mathbf{x})\]$t$ - time

$\mathbf{x}$ - horizontal position on the ocean surface

$\mathbf{k}$ - a 2D wave vector with components $\mathbf{k} = (k_x, k_z)$

$k_x = \frac{2\pi n}{L_x}$

$k_z = \frac{2\pi m}{L_z}$

$n, m$ - integers in the range [$-N/2 \leq n < N/2$] and [$-M/2 \leq m < M/2$].

$\tilde{h}(\mathbf{k}, t)$ - complex amplitude encoding the amplitude and **phase* of the wave at frequency $\mathbf{k}$ and time $t$

$N, M$ - the resolution of the FFT grid

What is the phase of a wave?

The phase is the position of a wave within its cycle at any point in time. It is measured in radians, a phase value of 0 means the wave is at its peak, while a phase value of $\frac{\pi}{2}$, means that the wave is halfway between the peak and zero.This heightfield is the result of the FFT converting the initial spectrum from the frequency domain to the spatial domain. This will be discussed in section 1.5. The complex amplitude $\tilde{h}(\mathbf{k}, t)$ is the heart of this formula. It is what we need to construct for every wave vector $\mathbf{k}$ on our grid. To do this we need two things: the JONSWAP spectrum, which tells us how much energy each wave frequency should carry, and the dispersion relation, which tells us how fast each wave travels. Section 1.3.

The dispersion relation allows us to propagate this heightfield forward in time, animating the waves. This will need to be recalculated every frame to update the heightfield accordingly. The dispersion relation defines the relationship between angular frequency $\omega$ and the wave number $k$.

In deep water, its formula is defined like this:

$\omega$ - Angular frequency. How fast the wave oscillates in time (radians/sec)

$g$ - Gravitational acceleration (9.81 m/s²)

$k$ - The Wavenumber. Waves per meter: $k = \frac{2\pi}{\lambda} $

What is angular frequency?

Regular frequency describes how many full cycles happen in a second. For example: A wave that completes 3 cycles in a second has $f = 3$Hz. Angular frequency comes from thinking about this cycle rotating around a unit circle rather than oscillating up and down. A full rotation around a unit circle is $2\pi$. Angular frequency is simply the regular frequency multiplied by $2\pi$. $$\omega = 2\pi f$$For our implementation, we will be using the form that encodes a finite depth:

\[\omega = \sqrt{gk\tanh(kD)}\]$D$ - Ocean Depth. Large values for D simplifies back to the base form while smaller values for D reduce the wave speed.

The dispersion relation formula is an approximation. There are many different relationships you can choose to fit your ocean.

With the general idea understood, we can now look at how the wave spectrum is constructed.

1.2 The Wave Spectrum

The wave spectrum is essentially a function that describes how the wave energy is distributed across combinations of angular frequencies $\omega$ and wind directions $\theta$.

The JONSWAP spectrum formula looks like this:

\[S_{\text{JONSWAP}}(\omega) = \text{scale} \cdot \phi_{TMA}(\omega) \cdot \frac{\alpha g^2}{\omega^5} \exp\left(-\frac{5}{4}\left(\frac{\omega_p}{\omega}\right)^4\right) \gamma^{\exp\left(-\frac{(\omega - \omega_p)^2}{2\sigma^2\omega_p^2}\right)}\]$\alpha$ - Energy scale, controls the overall wave height

$g$ - Gravitational acceleration (9.81 m/s²)

$\omega$ - Angular frequency of the wave being evaluated

$\omega_p$ - Peak frequency, the frequency with the most energy

$\gamma$ - Peak enhancement factor, controls the sharpness of the spectrum peak

$\sigma$ - Width parameter, 0.07 when $\omega \leq \omega_p$ and 0.09 when $\omega > \omega_p$

$\phi_{TMA}$ - TMA shallow water correction factor. Reduces the wave speed at shallow depths.

Before diving into each component of the JONSWAP, lets define a struct that will hold all the parameters that we will be populating.

struct JonswapParameters

{

float scale = 0.0f; // Used to scale the Spectrum [1.0f, 5.0f]

float spreadBlend = 0.0f; // Used to blend between agitated water motion, and windDirection [0.0f, 1.0f]

float swell = 0.0f; // Influences wave choppiness, the bigger the swell, the longer the wave length [0.0f, 1.0f]

float gamma = 0.0f; // Defines the Spectrum Peak [0.0f, 7.0f]

float shortWavesFade = 0.0f; // [0.0f, 1.0f]

float windDirection = 0.0f; // [0.0f, 360.0f]

float fetch = 0.0f; // Distance over which Wind impacts Wave Formation [0.0f, 10000.0f]

float windSpeed = 0.0f; // [0.0f, 100.0f]

// These values get calculated using the metrics above.

float angle = 0.0f;

float alpha = 0.0f;

float peakOmega = 0.0f;

}m_jonswapParams;

In code, calculating the JONSWAP spectrum looks like this:

float Ocean::JONSWAP(float omega)

{

float sigma = (omega <= m_jonswapParams.peakOmega) ? 0.07f : 0.09f; // width parameter

float r = exp(-(omega - m_jonswapParams.peakOmega) * (omega - m_jonswapParams.peakOmega) / 2.0f / sigma / sigma / m_jonswapParams.peakOmega / m_jonswapParams.peakOmega); // peak enhancement

float g = 9.81f; // gravity

float oneOverOmega = 1.0f / (omega + 1e-6f);

float peakOmegaOverOmega = m_jonswapParams.peakOmega / omega;

return m_jonswapParams.scale * TMACorrection(omega) * m_jonswapParams.alpha * g * g * oneOverOmega * oneOverOmega * oneOverOmega * oneOverOmega * oneOverOmega

* exp(-1.25f * peakOmegaOverOmega * peakOmegaOverOmega * peakOmegaOverOmega * peakOmegaOverOmega) * pow(abs(m_jonswapParams.gamma), r);

}

The TMA (Texel Marsen Arsloe) correction is used for the non directional component of the wave spectrum. This means that it does not care about the direction the waves are moving, instead the TMA functions on the depth of the ocean. In shallow waters, the seabed starts to intervene with the waves. The waves slow down, their shape changes and their energy distribution across waves get shifted. TMA accounts for this and adjusts the JONSWAP spectrum by multiplying the spectrum with a depth dependent factor.

float TMACorrection(float w)

{

float omegaH = omega * sqrt(OCEAN_DEPTH / 9.81f);

if (omegaH <= 1.0f)

return 0.5f * omegaH * omegaH;

if (omegaH < 2.0f)

return 1.0f - 0.5f * (2.0f - omegaH) * (2.0f - omegaH);

return 1.0f;

}

Before we continue, lets populate our JonswapParameters. I recommend you play with the values to meet your desired artistic vision.

We do need to calculate 3 of the variables: The angle, the alpha and the peakOmega.

m_jonswapParams.angle = m_jonswapParams.windDirection / 180.0f * PI;

m_jonswapParams.alpha = JonswapAlpha(m_jonswapParams.fetch, m_jonswapParams.windSpeed);

m_jonswapParams.peakOmega = JonswapPeakFequency(m_jonswapParams.fetch, m_jonswapParams.windSpeed);

float Ocean::JonswapAlpha(float fetch, float windSpeed)

{

return 0.076f * pow(9.81f * fetch / windSpeed / windSpeed, - 0.22f);

}

float Ocean::JonswapPeakFequency(float fetch, float windSpeed)

{

return 22.0f * pow(windSpeed * fetch / 9.81f / 9.81f, -0.33f);

}

Now that we have the wave spectrum, we can look at generating the initial heightfield.

1.3 Initial Spectrum Generation: H₀

We compute the initial spectrum once, my implementation does this on the CPU but its best done on the GPU since it can make great use of parallel computing.

The formula Tessendorf proposes in his paper is:

\[\tilde{h}_0(\mathbf{k}) = \frac{1}{\sqrt{2}}(\xi_r + i\xi_i)\sqrt{P_h(\mathbf{k})}\]$\tilde{h}_0(\mathbf{k})$ - The initial complex amplitude for wave vector $\mathbf{k}$. Encodes the amplitude and phase of one wave component.

$\mathbf{k}$ - A 2D wave vector representing the frequency and direction of a wave component.

$\xi_r$ - A Gaussian random number for the real part.

$\xi_i$ - A Gaussian random number for the imaginary part.

$P_h(\mathbf{k})$ - The wave spectrum energy at wave vector $\mathbf{k}$. In our implementation this is the JONSWAP spectrum.

$\frac{1}{\sqrt{2}}$ - A normalisation factor that ensures the correct distribution of wave amplitudes.

This data lives in the frequency domain.

We generate a complex number that encodes the amplitude and phase for every wave vector k. We store the resulting data in a R16G16B16A16_FLOAT texture.

Lets look at some code.

void Ocean::GenerateH0(std::shared_ptr<CommandList> commandList, const UINT cascade) {

float deltaK = 2.0f * PI / patchSize;

float highestK = (OCEAN_SUBRES / 2.0f) * deltaK; // nyquist limit

// stored in heap to avoid stack overflow

std::vector<std::complex<float>> H0(OCEAN_SUBRES * OCEAN_SUBRES, { 0,0 });

std::vector<std::complex<float>> H0Conj(OCEAN_SUBRES * OCEAN_SUBRES, { 0,0 });

const float lowCutoff = cascade == 0 ? 0.001f : (OCEAN_SUBRES * PI / m_oceanPatchSizes[cascade - 1]); // nyquist limit of previous cascade;

const float highCutoff = highestK;

for (int m = 0; m < OCEAN_SUBRES; m++) {

for (int n = 0; n < OCEAN_SUBRES; n++) {

// Get wave vector for this frequency

float kx = (n - OCEAN_SUBRES / 2.0f) * deltaK;

float ky = (m - OCEAN_SUBRES / 2.0f) * deltaK;

float k(sqrtf(kx * kx + ky * ky));

if (k >= lowCutoff && k <= highCutoff)

{

float kAngle = atan2(ky, kx);

float omega = DispersionRelation(k);

float dOmegadk = DispersionDerivative(k);

float spectrum = JONSWAP(omega) * DirectionSpectrum(kAngle, omega) * ShortWavesFade(k);

// Generate two independent gaussian random numbers

float xiR = GaussianRandom();

float xiI = GaussianRandom();

float amplitude = sqrtf(2.0f * spectrum * fabsf(dOmegadk) / k * deltaK * deltaK);

H0[m * OCEAN_SUBRES + n] = std::complex<float>(xiR * amplitude, xiI * amplitude);

}

}

}

// Needs its own loop since the conjugate needs all data of H0 to be valid

for (int m = 0; m < OCEAN_SUBRES; m++) {

for (int n = 0; n < OCEAN_SUBRES; n++) {

int m_minus = (OCEAN_SUBRES - m) % OCEAN_SUBRES;

int n_minus = (OCEAN_SUBRES - n) % OCEAN_SUBRES;

H0Conj[m * OCEAN_SUBRES + n] = std::conj(H0[m_minus * OCEAN_SUBRES + n_minus]);

}

}

std::vector<uint16_t> combinedData(OCEAN_SUBRES * OCEAN_SUBRES * 4);

for (int m = 0; m < OCEAN_SUBRES; m++) {

for (int n = 0; n < OCEAN_SUBRES; n++) {

int index = (m * OCEAN_SUBRES + n) * 4;

combinedData[index + 0] = FloatToHalf(H0[m * OCEAN_SUBRES + n].real()); // R: H0 real

combinedData[index + 1] = FloatToHalf(H0[m * OCEAN_SUBRES + n].imag()); // G: H0 imaginary

combinedData[index + 2] = FloatToHalf(H0Conj[m * OCEAN_SUBRES + n].real()); // B: H0_conj real

combinedData[index + 3] = FloatToHalf(H0Conj[m * OCEAN_SUBRES + n].imag()); // A: H0_conj imaginary

}

}

D3D12_SUBRESOURCE_DATA subData = {};

subData.pData = combinedData.data();

subData.RowPitch = OCEAN_SUBRES * 4 * sizeof(uint16_t); // 4 channels * 2 bytes

subData.SlicePitch = subData.RowPitch * OCEAN_SUBRES;

commandList->CopyTextureSubresource(m_oceanCascades[cascade].H0Texture, 0, 1, &subData);

}

Lets dissect this.

deltaK is the spacing between frequency samples. Since our patch covers patchSize metres in the real world, dividing $2\pi$ by it gives us the step size in frequency space.

highestK is the Nyquist limit, the highest frequency our grid can represent before aliasing occurs.

lowCutoff and highCutoff can be ignored for now, these will be explained in Chapter 4.

The double for loop

For each grid position $(n, m)$ we compute the wave vector components kx and ky. Subtracting OCEAN_SUBRES / 2.0f centers the grid around zero so we cover both positive and negative frequencies, representing waves travelling in all directions.

k is the magnitude of the wave vector, the wavenumber.

Computing the spectrum value

For each wave vector we compute three things.

kAngle is the direction the wave is travelling.

omega is the angular frequency from the dispersion relation.

The full spectrum value is then the product of three functions. JONSWAP gives the total energy at this frequency.

DirectionSpectrum distributes that energy across directions based on kAngle.

ShortWavesFade damps out very high frequencies to prevent aliasing artifacts.

How does directional spreading work?

The JONSWAP spectrum $S(\omega)$ only tells us how much energy a wave of a certain frequency should have. It says nothing about which direction that energy travels. The directional spreading function $D(\omega, \theta)$ solves this by distributing the energy across directions. Multiplying them together gives us the full directional spectrum: $$S(\omega, \theta) = S(\omega) \cdot D(\omega, \theta)$$ The spreading function we use is based on the Longuet-Higgins form, which most empirical models share: $$D(\omega, \theta) = Q(s) \cdot |\cos(\theta/2)|^{2s}$$ The parameter $s$ controls how concentrated the energy is around the wind direction. A large $s$ means waves are tightly focused along the wind. A small $s$ means energy spreads out in all directions. $Q(s)$ is a normalisation factor that ensures the total energy across all directions sums to 1. In code,Cosine2s is this function and NormalizationFactor is $Q(s)$, computed using a polynomial approximation rather than the exact Euler gamma function for performance reasons. Computing $s$: the Hasselmann model

We use the Hasselmann empirical model to compute $s$ based on how far the current frequency is from the peak frequency $\omega_p$:

float SpreadPower(float omega, float peakOmega)

{

if (omega > peakOmega)

return 9.77f * pow(abs(omega / peakOmega), -2.5f);

else

return 6.97f * pow(abs(omega / peakOmega), 5.0f);

}

9.77 and 6.97 come directly from Horvath's paper. Frequencies near the peak get a high $s$ value, meaning they are focused along the wind. Frequencies far above the peak get a low $s$, spreading more freely in all directions. The swell parameter

On top of the Hasselmann base value, we add a swell contribution: $$s_\xi = 16 \tanh\left(\frac{\omega_p}{\omega}\right)\xi^2$$ Where $\xi$ is the

swell parameter. Increasing swell adds to $s$, making waves more elongated and parallel, simulating waves that have travelled from a distant storm rather than being generated by local wind. Blending with spreadBlend

The final spreading function blends between two models based on the

spreadBlend parameter:

return lerp(

2.0f / PI * cos(theta) * cos(theta), // simple cosine squared, no directionality

Cosine2s(theta - angle, s), // full Hasselmann + swell spreading

spreadBlend

);

spreadBlend of 0 gives a simple spreading where all wavelengths spread equally regardless of frequency. A spreadBlend of 1 gives the full empirical Hasselmann model with swell. Values in between blend the two, giving you creative control over how directional the ocean looks. Computing the amplitude and storing H₀ Two independent Gaussian random numbers give each wave component a unique random phase. The amplitude formula converts the continuous spectrum density into a discrete amplitude value for this specific grid cell, accounting for the cell size deltaK * deltaK. Multiplying the random numbers by the amplitude gives us $\tilde{h}_0(\mathbf{k})$, stored as a unique complex number.

The Conjugate

The conjugate is simply the sign mirror of a complex number. For example the conjugate of $z = a + bi$ is $z = a - bi$. This conjugate is needed since the FFT takes complex numbers as input and produces a complex number as an output too. Our heightfield needs to use real numbers, so using the conjugate we can cancel out the imaginary part of the FFT output, leaving us with only real values. for example:

Texture Packing

Both H0 and its conjugate are packed into a single RGBA texture. The real and imaginary parts of H0 go into the R and G channels, the conjugate goes into B and A. The values are converted to 16 bit half precision floats to save memory. This texture is then uploaded to the GPU where the compute shader will read it every frame to propagate the waves forward in time.

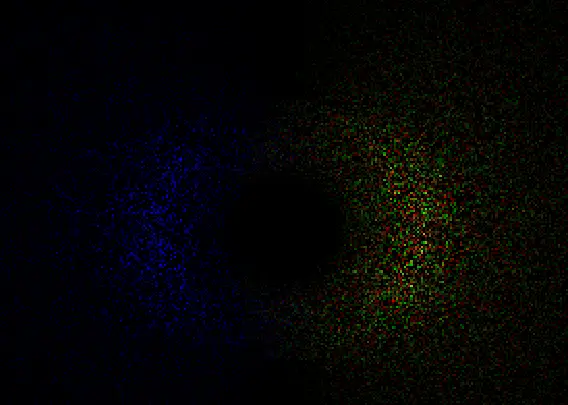

This function provides us with the initial spectrum texture. You can visualize this texture in your preferred graphics debugger, I’m using RenderDoc. You will need to change the color range to a very small value to see the colors clearly.

Your version might look slightly less colorful, mine is using multiple frequency bands which will be covered in Chapter 4.

Next we will look at how the FFT transforms this frequency domain data into real world wave displacements

1.4 Time Evolution: Animating the Waves

The next step now that we have the initial spectrum, is to bring our static data to life by animating it over time. Tessendorf provides us with the following formula:

\[\tilde{h}(\mathbf{k}, t) = \tilde{h}_0(\mathbf{k})\,e^{i\omega(k)t} + \tilde{h}_0^*(-\mathbf{k})\,e^{-i\omega(k)t}\]Every frame, we take our initial spectrum $\tilde{h}_0(\mathbf{k})$ and multiply it by $e^{i\omega t}$. From Euler’s formula we know that this advances the phase of each wave component forward in time at its own speed, determined by the dispersion relation. The second term $\tilde{h}_0^*(-\mathbf{k})\,e^{-i\omega(k)t}$ is the conjugate we discussed earlier, ensuring the output remains a real value.

One important detail is that we use a rounded version of $\omega$ when computing the time evolution. This ensures that every wave component completes full cycles within a fixed time period, so the ocean animation loops seamlessly without any visible discontinuity.

So we have the initial spectrum and its conjugate, the last missing term is the dispersion relation and the $e^{i\omega t}$ which we will calculate now.

Since we want to loop the animation, we need to introduce a new variable: REPEAT_TIME. Using this variable we can control the amount of time it takes for the simulation to loop back to its original state. I’m using a value of 500 but feel free to play around with this.

First we load our initial spectrum and conjugate data.

float4 H0Data = H0Texture.Load(int3(dispatchThreadID.xy, 0));

float2 h0 = H0Data.rg;

float2 h0conj = H0Data.ba;

float kx = 2.0f * PI * (dispatchThreadID.x - OCEAN_RESOLUTION / 2.0f) / patchSize;

float ky = 2.0f * PI * (dispatchThreadID.y - OCEAN_RESOLUTION / 2.0f) / patchSize;

float k = length(float2(kx,ky));

float kRcp = rcp(k);

// Prevent NaN values

if (k < 0.0001f)

{

kRcp = 1.0f;

}

We calculate the base frequency step.

float w = 2.0f * PI / REPEAT_TIME;

We can use this value as the stepsize around the unit circle and use time as the scalar that advances the dispersion.

float dispersion = floor(sqrt(GRAVITY * k) / w) * w * time;

We now plug this dispersion into Euler’s formula which gives us $e^{i\omega t}$. Add these helper functions:

float2 EulerFormula(float x)

{

return float2(cos(x), sin(x));

}

float2 complex_multiply(float2 W, float2 B)

{

return float2(W.x * B.x - W.y * B.y,

W.x * B.y + W.y * B.x);

}

float2 exponent = EulerFormula(dispersion);

Now we have all the components to calculate $\tilde{h}(\mathbf{k}, t)$

float2 htilde = complex_multiply(h0, exponent) + complex_multiply(h0conj, float2(exponent.x, -exponent.y));

We now successfully computed the heightfield in the frequency domain. Next to this, we also want to have the horizontal displacement and the surface slope. The horizontal displacement will make the waves look choppy and the slopes are used to calculate the surface normals which we will be using in Chapter 2 for lighting calculations.

From the animated spectrum $\tilde{h}(\mathbf{k}, t)$ we can derive everything we need in one pass. Rather than running separate FFTs for height, horizontal displacement and surface slopes, we compute all of them in the frequency domain before the FFT using a property of Fourier transforms: taking a spatial derivative in the real world is equivalent to multiplying by $ik$ in the frequency domain.

First we precompute $i\tilde{h}$, which is just the complex number rotated 90 degrees:

float2 ih = float2(-htilde.y, htilde.x);

Then we derive each output signal:

// the raw height field, becomes vertical displacement after the FFT

float2 displacementY = htilde;

// horizontal displacement in x and z, pointing in the wave travel direction

float2 displacementX = ih * kx * kRcp;

float2 displacementZ = ih * ky * kRcp;

// the surface slopes in x and z, used to compute normals for lighting

float2 displacementY_dx = ih * kx;

float2 displacementY_dz = ih * ky;

// second order derivatives used to compute the Jacobian determinant for foam detection. Covered in Chapter 3.

float2 displacementZ_dx = -htilde * kx * ky * kRcp;

float2 displacementZ_dz = -htilde * ky * ky * kRcp;

float2 displacementX_dx = -htilde * kx * kx * kRcp;

The negative sign on the second order derivatives comes from applying the $ik$ multiplication twice: $i^2 = -1$, so $ik \cdot ik = -k^2$.

All eight signals are then packed into two float4 textures and converted to the spatial domain by the FFT in the next pass.

float2 htildeDisplacementX = float2(displacementX.x - displacementZ.y, displacementX.y + displacementZ.x);

float2 htildeDisplacementZ = float2(displacementY.x - displacementZ_dx.y, displacementY.y + displacementZ_dx.x);

float2 htildeSlopeX = float2(displacementY_dx.x - displacementY_dz.y, displacementY_dx.y + displacementY_dz.x);

float2 htildeSlopeZ = float2(displacementX_dx.x - displacementZ_dz.y, displacementX_dx.y + displacementZ_dz.x);

displacementTexture[dispatchThreadID.xy] = float4(htildeDisplacementX, htildeDisplacementZ);

slopeTexture[dispatchThreadID.xy] = float4(htildeSlopeX, htildeSlopeZ);

With this, we now have the heightfield, horizontal displacement and slopes, all calculated in the frequency domain. The last step is to transform this data to the spatial domain.

1.5 The Butterfly FFT

Understanding the FFT

The ocean simulation depends on converting a frequency domain wave spectrum into real displacement values every frame. That conversion is an Inverse Fast Fourier Transform. In this section I will explain the idea behind this transformation and how it works. To not make this article too long/complex, I will not go too much into detail since understanding it fully is not required to make it work. If you are brave enough you can dive deeper and read the paper by Flugge and Matusiak.

The DFT: correct but slow

The Discrete Fourier Transform is defined as: $$F[k] = \sum_{n=0}^{N-1} f[n] \cdot e^{-i \frac{2\pi}{N} kn}$$ For each output frequency $k$, you multiply every input sample $f[n]$ by a complex rotation factor and sum the results. If you have $N$ samples and $N$ output frequencies, that is $N$ multiplications per output and $N$ outputs. A total of $N^2$ operations. For $N = 512$ that is 262,144 complex multiplications per row, per column, per frame. That is not viable in real time. The FFT computes the exact same result in $O(N \log N)$ time. For $N = 512$ that is roughly 4,608 operations. The result is identical. ***The key insight: reuse*** Consider $N = 4$. Since $e^{-i\pi/2} = -i$, the twiddle factor simplifies to $(-i)^{kn}$, and the four outputs expand to: $$F[0] = f[0] + f[1] + f[2] + f[3]$$ $$F[1] = f[0] - if[1] - f[2] + if[3]$$ $$F[2] = f[0] - f[1] + f[2] - f[3]$$ $$F[3] = f[0] + if[1] - f[2] - if[3]$$ Look at $F[0]$ and $F[2]$. Both contain $f[0] + f[2]$ and $f[1] + f[3]$, just combined differently. Look at $F[1]$ and $F[3]$. Both contain $f[0] - f[2]$ and $f[1] - f[3]$. The naive DFT computes each output independently, so it recomputes these components four separate times. The FFT computes them once and reuses them across all outputs. This is the entire idea.The butterfly

The reusable building block is called a butterfly. Given two complex numbers $a$ and $b$ and a twiddle factor $W$, one butterfly produces: $$A = a + W \cdot b$$ $$B = a - W \cdot b$$ One addition and one subtraction, both reading from the same two inputs. For general $N$ the twiddle factor is: $$W_N^k = e^{-i \frac{2\pi}{N} k}$$ This is a point on the unit circle in the complex plane, a rotation by $k$ steps of $\frac{2\pi}{N}$ radians. In the shader this is computed as:

float angle = -2.0f * PI * float(w) / float(TOTALPOINTS);

float2 twiddle = float2(cos(angle), -sin(angle));

The Stages

For $N = 4$ the FFT runs in two stages: **Stage 1** runs two butterflies, one on $f[0], f[2]$ and one on $f[1], f[3]$. This produces four intermediate values. **Stage 2** feeds those intermediate values into two more butterflies to produce the final outputs $F[0], F[1], F[2], F[3]$. For general $N$ there are $\log_2 N$ stages, and each stage runs $N/2$ butterflies. Total work is therefore: $$\frac{N}{2} \times \log_2 N$$ which is $O(N \log N)$. In the shader this is the outer loop:

for (uint stage = 0; stage < log2(TOTALPOINTS); ++stage)

Ping pong buffers

Each stage reads from the previous stage's output and writes its results somewhere else, you cannot read and write the same memory simultaneously on the GPU. The shader handles this with a double buffer and a flag that alternates each stage:

groupshared float2 bufferRG[2][TOTALPOINTS];

groupshared float2 bufferBA[2][TOTALPOINTS];

uint flag = 0;

for (uint stage = 0; stage < log2(TOTALPOINTS); ++stage)

{

bufferRG[1 - flag][groupIndex] = bufferRG[flag][i] + complex_multiply(twiddle, bufferRG[flag][i + b]);

flag = 1 - flag;

GroupMemoryBarrierWithGroupSync();

}

Bit Reversal

For the butterfly stages to chain correctly, the input samples need to be fed in a specific order before stage 0. That order is bit reversal of the index, where the binary representation of each index is reversed and samples are shuffled accordingly. Index 1 (`001`) swaps with index 4 (`100`), for example. This reordering is a known property of the Cooley-Tukey Radix-2 algorithm, and the paper handles it as a separate permutation pass before the FFT runs. Garrett Gunnell takes a slightly different approach and skips the separate permutation pass entirely. Instead, the bit reversal is folded into the butterfly index arithmetic directly. At each stage, each thread computes the correct source indices on the fly:

uint b = TOTALPOINTS >> (stage + 1);

uint w = b * (groupIndex / b);

uint i = (w + groupIndex) % TOTALPOINTS;

2 Passes: Rows then columns

A 2D FFT on an $N \times N$ texture is separable. You run the 1D FFT on every row, then run the 1D FFT on every column of the result. The shader handles both passes with a single `columnPass` constant:

if (columnPass)

data = outputTexture.Load(int3(groupID.x, groupIndex, 0));

else

data = outputTexture.Load(int3(groupIndex, groupID.x, 0));

The complete FFT shader

groupshared float2 bufferRG[2][TOTALPOINTS];

groupshared float2 bufferBA[2][TOTALPOINTS];

[numthreads(TOTALPOINTS, 1, 1)]

void main(uint3 dispatchThreadID : SV_DispatchThreadID, uint3 groupID : SV_GroupID, uint groupIndex : SV_GroupIndex)

{

float4 data;

if (columnPass)

data = outputTexture.Load(int3(groupID.x, groupIndex, 0)); // column: x fixed, y varies

else

data = outputTexture.Load(int3(groupIndex, groupID.x, 0)); // row: x varies, y fixed

bufferRG[0][groupIndex] = data.rg;

bufferBA[0][groupIndex] = data.ba;

GroupMemoryBarrierWithGroupSync();

uint flag = 0;

for (uint stage = 0; stage < log2(TOTALPOINTS); ++stage)

{

uint b = TOTALPOINTS >> (stage + 1);

uint w = b * (groupIndex / b);

uint i = (w + groupIndex) % TOTALPOINTS;

float angle = -2.0f * PI * float(w) / float(TOTALPOINTS);

float2 twiddle = float2(cos(angle), -sin(angle));

bufferRG[1 - flag][groupIndex] = bufferRG[flag][i] + complex_multiply(twiddle, bufferRG[flag][i + b]);

bufferBA[1 - flag][groupIndex] = bufferBA[flag][i] + complex_multiply(twiddle, bufferBA[flag][i + b]);

flag = 1 - flag;

GroupMemoryBarrierWithGroupSync();

}

float2 resultRG = bufferRG[flag][groupIndex];

float2 resultBA = bufferBA[flag][groupIndex];

if (columnPass)

outputTexture[int2(groupID.x, groupIndex)] = float4(resultRG, resultBA);

else

outputTexture[int2(groupIndex, groupID.x)] = float4(resultRG, resultBA);

}

Putting all this together, after both passes complete we have converted our wave spectrum from the frequency domain into real spatial data. Before we can use this data to displace our vertices, we need to apply one last step to correctly arrange it.

Permuting the FFT Output

Before we can use the FFT results, we need to apply two final steps: centering the output and unpacking our signals.

Centering the spectrum

The raw FFT output places the zero frequency component in the corner of the texture. For our ocean patch this means the waves are arranged awkwardly, with the lowest frequencies split across opposite corners. We fix this by the following permute function:

float4 Permute(float4 data, float3 id)

{

return data * (1.0f - 2.0f * ((id.x + id.y) % 2));

}

This flips the sign of every other texel in a checkerboard pattern, which has the effect of shifting the zero frequency component from the corner to the center. After this step the wave frequencies are arranged symmetrically, which is what we need for a correct tileable patch.

Unpacking the signals

Back in the wave animation pass we packed multiple signals into two float4 textures to save GPU passes. Now we unpack them:

float2 dxdz = htildeDisplacement.rg; // horizontal displacement in X and Z

float2 dydxz = htildeDisplacement.ba; // vertical displacement Y, and the Dxz cross derivative

float2 dyxdyz = htildeSlope.rg; // surface slopes in X and Z, used for normals

float2 dxxdzz = htildeSlope.ba; // second derivatives Dxx and Dzz, used for the Jacobian (Expanded upon in Chapter 3)

Applying choppiness

The horizontal displacements are scaled by a factor called Lambda before being applied to the mesh. Lambda controls how choppy the waves look. A value of 0 gives smooth rolling swells, higher values push the wave crests forward to create the sharp peaked shapes you see on a real ocean surface.

float3 displacement = float3(Lambda.x * dxdz.x, dydxz.x, Lambda.y * dxdz.y);

The slopes used for normal calculation get a Lambda correction too. In areas of strong horizontal displacement the surface is being stretched and compressed, which would cause the normal map to produce incorrect results without accounting for it:

float2 slopes = dyxdyz.xy / (1 + abs(dxxdzz * Lambda));

Finally we store this data in their corresponding textures:

slopeTexture[dispatchThreadID.xy] = float4(slopes, 0.0f, 1.0f);

displacementTexture[dispatchThreadID.xy] = float4(displacement, 0.0f);

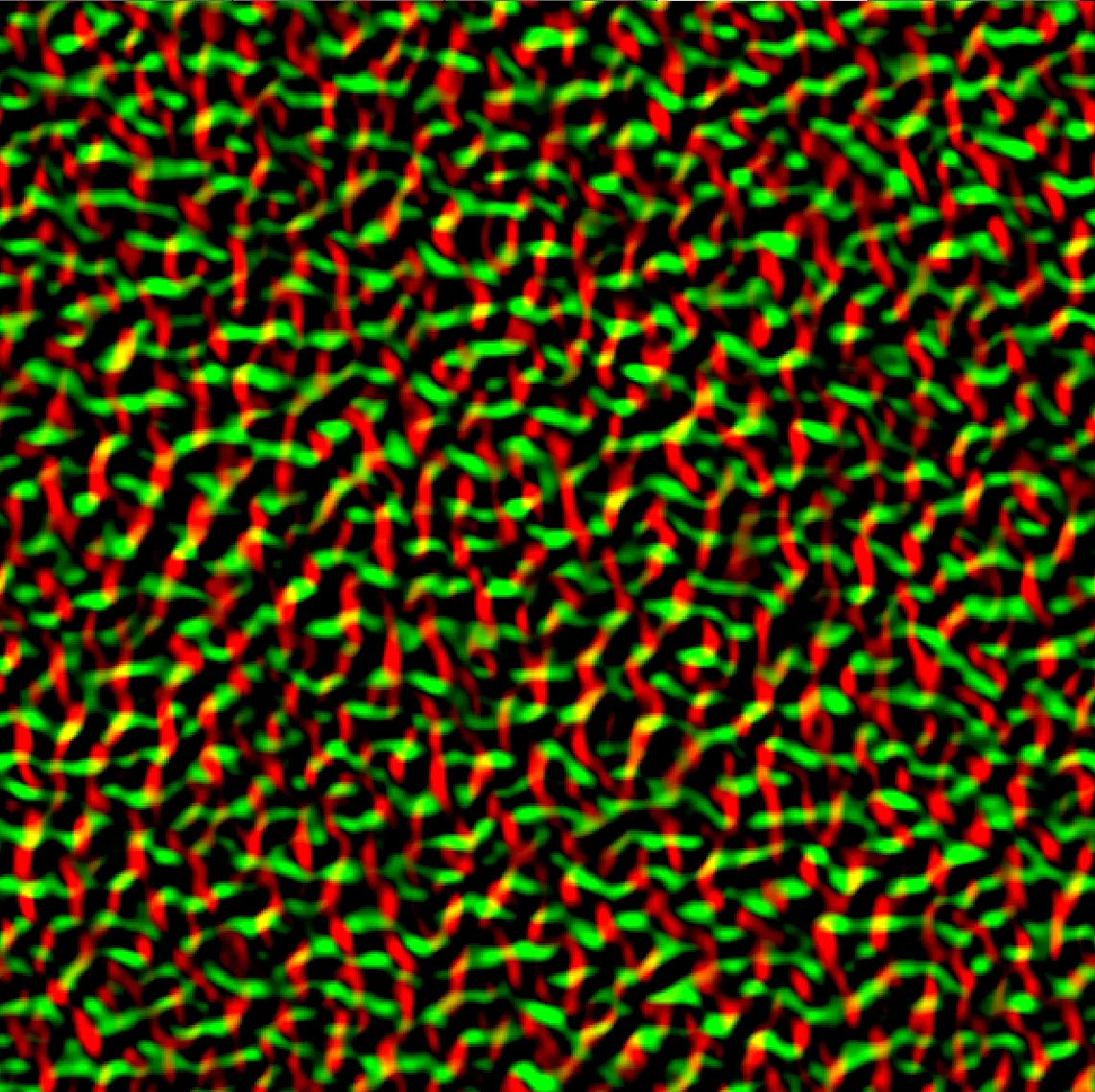

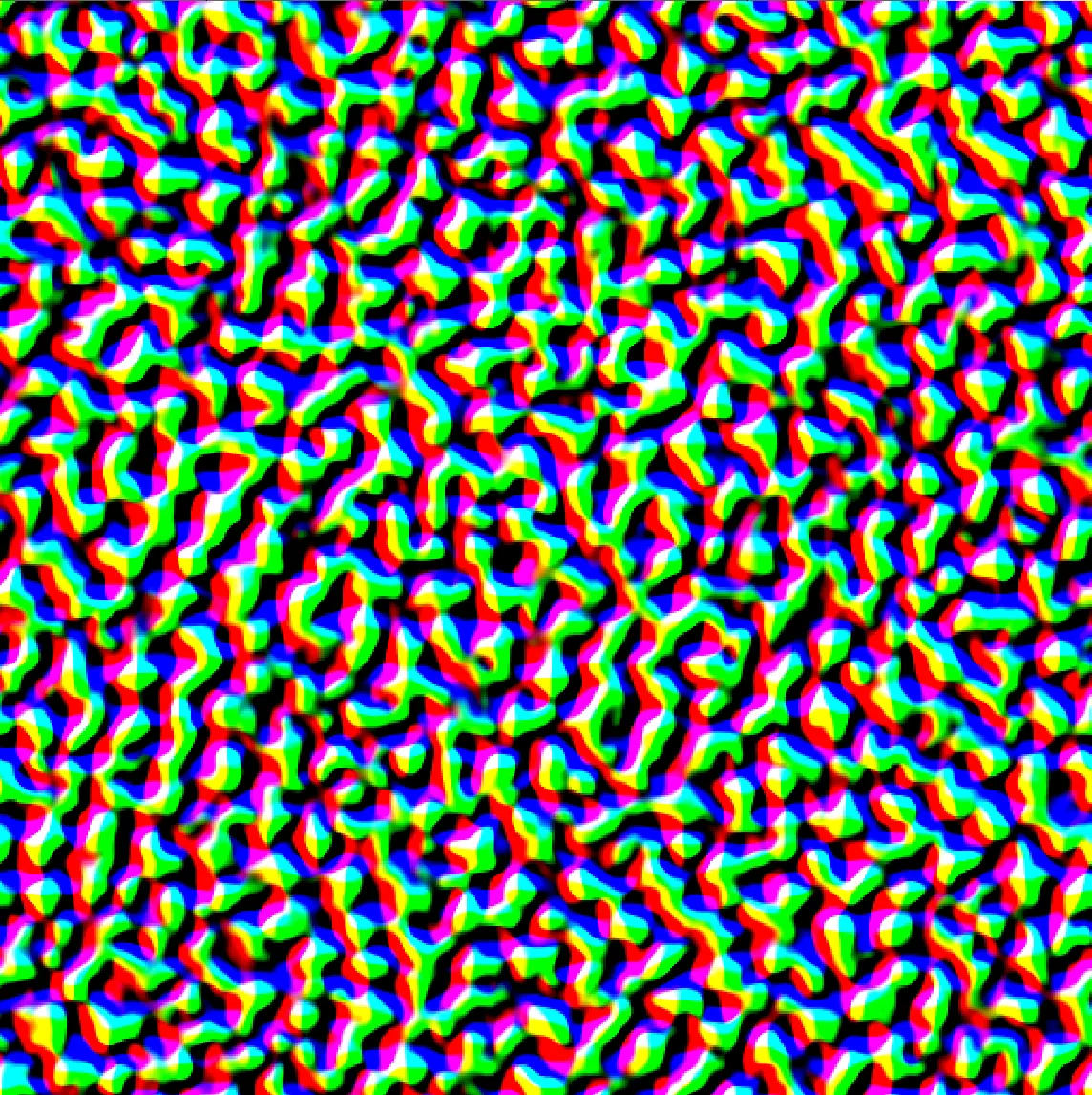

With this, the hard part is behind us. Take a look at the contents of the texture, it should look something like this:

You’ve succesfully created the slope and displacement textures! In the next chapter I will show you how to use these textures to render the Ocean.

Chapter 2 Rendering & Shading

2.1 Vertex Displacement

At this point we have two textures coming out of the permute pass: a displacement texture and a slope texture. The displacement texture holds the XYZ offset to apply to each vertex. The slope texture holds the surface derivatives we need to reconstruct the surface normal in the pixel shader.

The ocean mesh is just a flat grid of vertices. In the vertex shader, each vertex samples the displacement texture and adds the result to its position. The UV coordinates are derived from world-space position divided by the patch size.

float2 uv = data.Position.xz / patchSize;

float3 displacement = DisplacementTexture.SampleLevel(linearWrapSampler, uv, 0).rgb;

displacedPosition += displacement;

Make sure you use a WRAP sampler here, not CLAMP. With CLAMP, every vertex outside the [0,1] UV range samples the border value and you end up with a completely flat ocean.

2.2 Normal Reconstruction & Shading

In the pixel shader, sample the slope texture using the same world-space UV. Reconstructing the surface normal from the slopes is a single line:

float2 uv = IN.PositionWS.xz / patchSize;

float4 slope = SlopeTexture.Sample(anisotropicSampler, uv);

float3 normal = normalize(float3(-slope.x, 1.0f, -slope.y));

This gives you a smooth normal that captures all the surface detail baked into the slope texture. From here you can use this normal however you like, whether that is a simple diffuse and specular shading model or a full PBR setup. My implementation uses PBR with GGX specular, Image Based Lighting and a subsurface scattering approximation at wave crests, all of which I may cover in a future post.

If everything is wired up correctly you should have a shaded animated ocean.

References

- Tessendorf, J. (2001). Simulating Ocean Water. SIGGRAPH Course Notes.

- Christopher J. Horvath

- GarrettGunnell.

- gasgiant.

- Fynn-Jorin Flügge.

- Bolba, G. Ocean rendering blog post.